DynaMITe: Dynamic Query Bootstrapping for Multi-object Interactive Segmentation Transformer


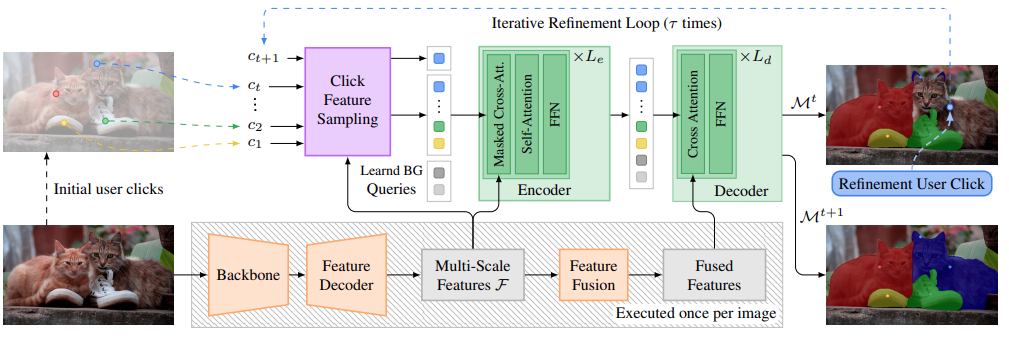

DynaMITe consists of a backbone, a feature decoder, and an interactive Transformer. Point features at the click locations at time t are translated into queries which, together with the multi-scale features, are processed by a Transformer encoder-decoder structure to generate a set of output masks ℳ_t for all the relevant objects. Based on ℳ_t, the user provides a new input click, which the interactive Transformer in turn uses to generate a new set of updated masks ℳ_{t+1}. This process is iterated τ times until the masks are fully refined.
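The loop described in the caption can be illustrated with a small toy sketch. This is not the DynaMITe implementation: `backbone`, `clicks_to_queries`, `decode_masks`, and `simulate_click` are hypothetical stand-ins, and the "decoder" is a simple nearest-query assignment rather than a Transformer. The sketch only aims to show the control flow, under the assumption that image features are computed once and that every click (for any object, or for the background with id 0) becomes a query that is reused in later rounds.

```python
# Toy sketch of the interactive loop from the caption. NOT the DynaMITe model:
# every function here is a hypothetical stand-in chosen for illustration.

def backbone(image):
    """Stand-in feature extractor; meant to run once per image."""
    backbone.calls += 1
    return [[float(v) for v in row] for row in image]

backbone.calls = 0  # counts how often features are (re)computed


def clicks_to_queries(features, clicks):
    """Turn each click (y, x, obj_id) into a query built from the point feature."""
    return [(features[y][x], y, x, obj_id) for (y, x, obj_id) in clicks]


def match_cost(feature, y, x, query):
    """Feature distance dominates; spatial distance only breaks near-ties."""
    q_feature, q_y, q_x, _ = query
    return 10.0 * abs(feature - q_feature) + 0.01 * (abs(y - q_y) + abs(x - q_x))


def decode_masks(features, queries):
    """Stand-in decoder: label each pixel with the object of its best query."""
    return [
        [min(queries, key=lambda q: match_cost(f, y, x, q))[3]
         for x, f in enumerate(row)]
        for y, row in enumerate(features)
    ]


def simulate_click(masks, ground_truth):
    """Simulated user: click the first mislabeled pixel, or None if all match."""
    for y, row in enumerate(ground_truth):
        for x, obj_id in enumerate(row):
            if masks[y][x] != obj_id:
                return (y, x, obj_id)
    return None


def interactive_segmentation(image, ground_truth, first_click, tau):
    """Refine masks for all objects at once, for at most tau extra clicks."""
    features = backbone(image)  # computed once, reused for every click
    clicks = [first_click]
    masks = decode_masks(features, clicks_to_queries(features, clicks))
    for _ in range(tau):
        click = simulate_click(masks, ground_truth)
        if click is None:  # masks already match the target
            break
        clicks.append(click)
        masks = decode_masks(features, clicks_to_queries(features, clicks))
    return masks, clicks


# Two objects (ids 1 and 2) on a background (id 0).
image = [[0, 0, 0, 0],
         [5, 5, 0, 9],
         [5, 5, 0, 9]]
ground_truth = [[0, 0, 0, 0],
                [1, 1, 0, 2],
                [1, 1, 0, 2]]

masks, clicks = interactive_segmentation(image, ground_truth, (1, 0, 1), tau=5)
print(masks == ground_truth, len(clicks), backbone.calls)
```

In this toy run the stand-in backbone is called a single time, while each new click only adds a query and re-runs the lightweight decoding step, which mirrors the division of work the caption describes.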

Abstract

Most state-of-the-art instance segmentation methods rely on large amounts of pixel-precise ground-truth annotations for training, which are expensive to create. Interactive segmentation networks help generate such annotations based on an image and the corresponding user interactions such as clicks. Existing methods for this task can only process a single instance at a time and each user interaction requires a full forward pass through the entire deep network. We introduce a more efficient approach, called DynaMITe, in which we represent user interactions as spatio-temporal queries to a Transformer decoder with a potential to segment multiple object instances in a single iteration. Our architecture also alleviates any need to re-compute image features during refinement, and requires fewer interactions for segmenting multiple instances in a single image when compared to other methods. DynaMITe achieves state-of-the-art results on multiple existing interactive segmentation benchmarks, and also on the new multi-instance benchmark that we propose in this paper.

BibTeX

@inproceedings{RanaMahadevan23Arxiv,
  title={DynaMITe: Dynamic Query Bootstrapping for Multi-object Interactive Segmentation Transformer},
  author={Rana, Amit and Mahadevan, Sabarinath and Hermans, Alexander and Leibe, Bastian},
  booktitle={arXiv preprint arXiv:2304.06668},
  year={2023}
}